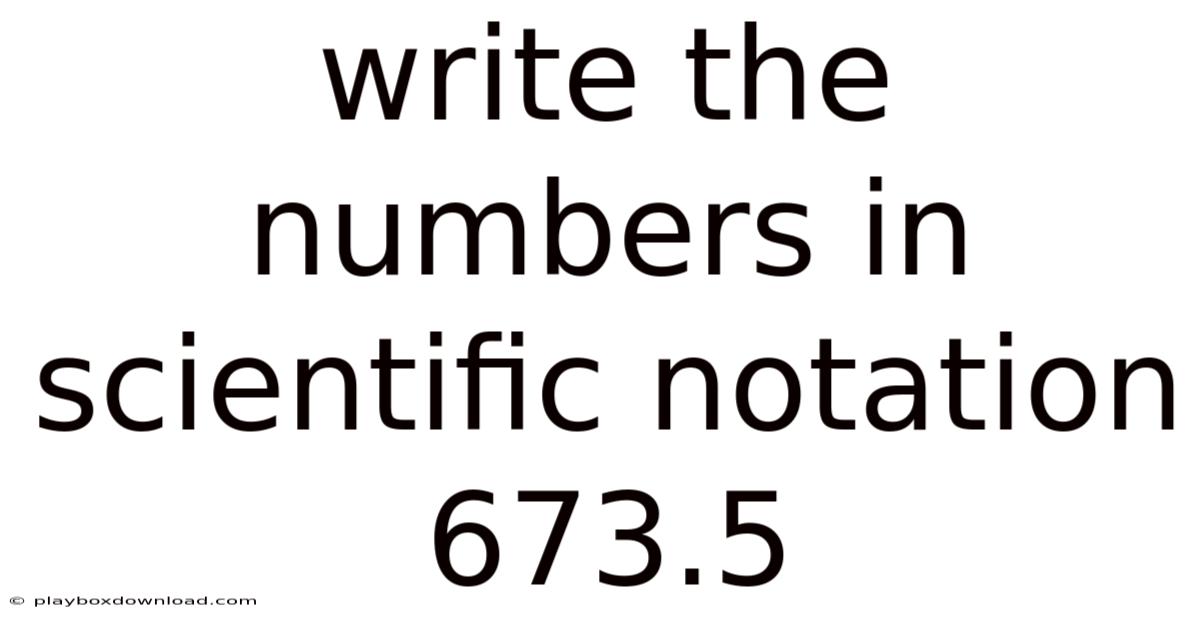

How to Write 673.5 in Scientific Notation: A Step-by-Step Guide

Scientific notation is a powerful tool for simplifying unwieldy numbers, making them easier to read, compare, and calculate. This method reduces cumbersome digits into a compact format, ideal for fields like physics, engineering, and data science. Whether you’re a student tackling algebra or a professional working with large datasets, understanding how to convert numbers like 673.5 into scientific notation is essential. Let’s walk through the process of transforming 673.5 into scientific notation, with clarity and precision at every step.

Step 1: Understand the Basics of Scientific Notation

Scientific notation expresses numbers as a product of two parts:

- A coefficient (a number that is at least 1 and less than 10).

- A power of 10 (written as $10^n$, where $n$ is an integer).

The goal is to rewrite the original number so that the coefficient falls within the 1–10 range, and the power of 10 accounts for the decimal shift. For 673.5, this means moving the decimal point to create a coefficient between 1 and 10.
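As a compact restatement of the two parts above, the general form can be written as follows. The symbols $x$ and $a$ are labels chosen here for illustration; only $n$ appears in the text above.

$$x = a \times 10^{n}, \qquad 1 \le |a| < 10, \qquad n \in \mathbb{Z}$$

Here $a$ is the coefficient and $n$ is the integer exponent. The absolute value lets the same form cover negative numbers; zero has no coefficient in this range and is usually written simply as 0.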

Step 2: Move the Decimal Point to Create the Coefficient

Start with the original number: 673.5.

- Move the decimal point to the left until only one non-zero digit remains to its left. For 673.5, that takes two moves:

- First move: 67.35

- Second move: 6.735

Now, the coefficient is 6.735, which satisfies the 1–10 rule.

Step 3: Determine the Exponent for the Power of 10

The number of places you moved the decimal point becomes the exponent of 10. Since we shifted the decimal two places to the left, the exponent is +2.

- If the decimal had moved right, the exponent would be negative.

- If the decimal hadn’t moved (e.g., 5.0), the exponent would be 0.

This adjustment ensures the number is expressed in a standardized format. For 673.5, the exponent is +2, so we write it as 6.735 × 10².
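Steps 2 and 3 can be sketched in a few lines of Python. This is only a minimal illustration, not a reference implementation: the function name `to_scientific` is hypothetical, the input is passed as a string so that `Decimal` can represent it exactly, and returning `(0, 0)` for zero is a convention assumed here.

```python
from decimal import Decimal


def to_scientific(value: str) -> tuple[Decimal, int]:
    """Return (coefficient, exponent) with 1 <= |coefficient| < 10."""
    number = Decimal(value)
    if number == 0:
        # Assumption: zero has no normalized form, so return (0, 0).
        return Decimal(0), 0

    coefficient, exponent = number, 0
    # Decimal point moves left: divide by 10, exponent goes up by one.
    while abs(coefficient) >= 10:
        coefficient /= 10
        exponent += 1
    # Decimal point moves right: multiply by 10, exponent goes down by one.
    while abs(coefficient) < 1:
        coefficient *= 10
        exponent -= 1
    return coefficient, exponent


coefficient, exponent = to_scientific("673.5")
print(f"{coefficient} x 10^{exponent}")  # 6.735 x 10^2
```

Each pass through the first loop corresponds to one "move" of the decimal point in Step 2, and the counter it increments is the exponent from Step 3.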

Step 4: Verify the Calculation

Check that multiplying the coefficient by the power of 10 returns the original number:

$6.735 \times 10^2 = 673.5$.

This confirms the conversion is accurate.
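The same check can be run in code. The sketch below uses Python's `decimal` module, chosen here so the arithmetic is exact in base ten; with ordinary binary floats the product may differ in the last digit. The variable names are illustrative.

```python
from decimal import Decimal

coefficient = Decimal("6.735")
exponent = 2

# Multiply the coefficient by the power of ten to undo the conversion.
restored = coefficient * Decimal(10) ** exponent

print(restored == Decimal("673.5"))  # True
```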

Step 5: Apply to Real-World Scenarios

In scientific contexts, such as analyzing experimental data or chemical concentrations, this notation streamlines comparisons and calculations. It also aids in memorizing and communicating precise values efficiently.

By mastering this technique, you’ll enhance your ability to handle numerical data with confidence. Scientific notation not only simplifies calculations but also strengthens your understanding of numerical relationships.

To summarize, converting numbers like 673.5 into scientific notation is a straightforward yet impactful skill. It empowers you to work through complex calculations with clarity and precision. Embrace this method, and you’ll find it invaluable in both academic and professional settings.

Conclusion: Scientific notation is more than a format—it’s a vital skill for simplifying and communicating numerical information effectively.

Handling Extremely Small or Large Numbers

While 673.5 required a positive exponent, scientific notation equally simplifies numbers far smaller than 1. For example, 0.000045 becomes $4.5 \times 10^{-5}$ by moving the decimal point five places to the right, until one non-zero digit sits to its left. The negative exponent reflects this rightward shift. This symmetry allows consistent representation across magnitudes, from atomic scales ($1.67 \times 10^{-27}$ kg for a proton) to cosmic distances ($9.46 \times 10^{15}$ meters in a light-year).
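The symmetry between leftward and rightward shifts can be seen in a short Python sketch. It relies on two methods of the standard `decimal.Decimal` type: `adjusted()`, which reports the power of ten of the leading digit, and `scaleb()`, which shifts the decimal point. The helper name `split_magnitude` is hypothetical.

```python
from decimal import Decimal


def split_magnitude(value: str) -> tuple[Decimal, int]:
    """Split a decimal string into (coefficient, exponent)."""
    number = Decimal(value)
    exponent = number.adjusted()             # power of ten of the leading digit
    coefficient = number.scaleb(-exponent)   # shift the decimal point back
    return coefficient.normalize(), exponent


print(split_magnitude("0.000045"))  # (Decimal('4.5'), -5)
print(split_magnitude("673.5"))     # (Decimal('6.735'), 2)
```

The same two calls handle both directions: a number below 1 simply yields a negative exponent.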

Common Pitfalls to Avoid

- Incorrect coefficient range: Ensure the coefficient is ≥1 and <10. Writing $67.35 \times 10^1$ for 673.5 violates this rule.

- Exponent sign errors: Moving left gives a positive exponent; moving right gives a negative one.

- Counting moves inaccurately: Each shift counts as one, including across zeros (e.g., 0.005 → $5 \times 10^{-3}$, not $0.5 \times 10^{-2}$).
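The coefficient-range rule from the list above is easy to test mechanically. The following Python sketch assumes a hypothetical helper named `is_normalized`; it checks only the range, not whether the exponent matches.

```python
def is_normalized(coefficient: float) -> bool:
    """True when 1 <= |coefficient| < 10, the range scientific notation requires."""
    return 1 <= abs(coefficient) < 10


print(is_normalized(6.735))  # True
print(is_normalized(67.35))  # False: one more leftward shift is needed
print(is_normalized(0.5))    # False: one more rightward shift is needed
```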

Beyond Manual Conversion

Modern tools—calculators, spreadsheets, and programming languages—use scientific notation natively (often displaying as 6.735E2). Understanding the manual process, however, builds intuition for error-checking and interpreting data outputs, especially in fields like microbiology (bacterial counts) or astronomy (stellar distances).
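As one illustration of that E-style output, Python's built-in format specifier `e` produces it, and `float()` reads it back. Python is used here only as an example of such a tool; other languages and spreadsheets differ in the exact digits and padding they show.

```python
value = 673.5

# Format with three digits after the decimal point in E notation.
formatted = f"{value:.3e}"
print(formatted)  # 6.735e+02

# Parse E notation back into a number.
parsed = float("6.735E2")
print(parsed)     # 673.5
```

The `e+02` suffix is the exponent from Step 3, written with an explicit sign and two digits.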

Conclusion

Scientific notation distills complexity into clarity, transforming unwieldy numbers into manageable forms. By internalizing its principles (the coefficient’s constrained range and the exponent’s directional logic), you gain more than a computational trick; you acquire a universal language for scale. Whether analyzing quantum particles or galactic structures, this notation empowers precise communication, reduces calculation errors, and reveals the elegant order within numerical diversity. In a world saturated with data, fluency in scientific notation is not merely useful; it is essential for thoughtful engagement with science, technology, and quantitative reasoning.

Building on this foundation, mastering scientific notation also enhances problem-solving in fields like engineering, physics, and data analysis. For example, expressing energy values such as 2.5 × 10⁶ joules or force magnitudes like 3.2 × 10⁸ newtons becomes second nature, enabling efficient comparisons and transformations. This skill bridges theoretical concepts with real-world applications, from designing circuits to interpreting climate models.

Practicing conversions also sharpens your ability to tackle non-standard numbers, whether decimals embedded in scientific formulas or logarithmic scales in data visualization. It fosters a deeper appreciation for the balance between precision and simplicity in scientific communication.

In essence, scientific notation is a cornerstone of numerical literacy. Its mastery not only streamlines calculations but also cultivates a mindset attuned to the nuances of scale—a trait invaluable in navigating challenges across disciplines.

In short, embracing scientific notation transforms abstract numbers into actionable insights, reinforcing its role as a critical tool for anyone seeking clarity in quantitative domains. This proficiency ultimately bridges the gap between raw data and meaningful understanding.

As computational systems grow increasingly complex, the ability to mentally parse exponential magnitudes remains a vital cognitive checkpoint. When algorithms process terabytes of genomic sequences or simulate atmospheric dynamics, the underlying arithmetic still relies on the same base-ten framework. Recognizing when a result deviates by an order of magnitude can be the difference between a valid discovery and a costly miscalculation. Fluency in this system is therefore less about rote calculation and more about cultivating a quantitative instinct, one that allows practitioners to quickly validate outputs, flag anomalies, and maintain rigor when translating digital results into real-world decisions.
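An order-of-magnitude sanity check of this kind can be sketched in Python. The function names `order_of_magnitude` and `off_by_order` are hypothetical, the inputs are assumed to be nonzero, and the comparison is deliberately coarse: it looks only at the exponent a value would have in scientific notation.

```python
import math


def order_of_magnitude(x: float) -> int:
    """Exponent n for which |x| = a * 10**n with 1 <= a < 10 (x must be nonzero)."""
    return math.floor(math.log10(abs(x)))


def off_by_order(expected: float, actual: float) -> bool:
    """True when the two values would carry different exponents."""
    return order_of_magnitude(expected) != order_of_magnitude(actual)


print(order_of_magnitude(673.5))    # 2
print(off_by_order(673.5, 6735.0))  # True
print(off_by_order(673.5, 702.1))   # False
```

Because it compares exponents only, this check also flags close values that straddle a power of ten (such as 950 and 1050), so it suits quick plausibility screening rather than precise tolerance testing.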

This instinct also proves indispensable as collaborative research continues to dissolve traditional disciplinary boundaries. A materials engineer evaluating nanoscale tolerances, a public health official tracking pathogen concentrations, and a financial analyst modeling macroeconomic indicators all depend on the same structural logic to communicate magnitude. By providing a consistent reference point, scientific notation minimizes ambiguity, accelerates peer review, and ensures that specialized findings remain accessible to broader audiences. In an era where data generation outpaces human processing capacity, this shared mathematical vocabulary acts as a stabilizing force, keeping interpretation grounded and reproducible.

Ultimately, scientific notation persists not because it reinvents arithmetic, but because it elegantly addresses a fundamental cognitive constraint: our innate difficulty in intuitively grasping extremes. By compressing unwieldy values into a standardized, scalable format, it transforms overwhelming quantities into tractable concepts. Mastering this convention equips students, researchers, and professionals with a reliable navigational tool for any numerical landscape, ensuring that as our measurements stretch toward the infinitesimal or the astronomical, our capacity to reason about them keeps pace. In both theoretical exploration and applied problem-solving, this disciplined approach to magnitude remains an indispensable foundation for clear, accurate, and forward-thinking analysis.

The scalability of scientific notation proves equally crucial in technological innovation and engineering design. Whether specifying the tolerances required in semiconductor manufacturing at the nanometer scale or quantifying the immense energy outputs of fusion reactors, this notation provides the necessary precision without overwhelming communication channels. It allows engineers to express critical parameters concisely in blueprints, technical specifications, and control algorithms, ensuring accuracy across teams and manufacturing stages. This precision is not merely academic; it directly impacts safety, efficiency, and the viability of advanced technologies, where errors in magnitude can cascade into catastrophic failures.

Moreover, the rise of data science and big-data analytics underscores the enduring relevance of scientific notation. When visualizing datasets containing billions of entries or modeling phenomena spanning geological epochs, standard numerical forms become impractical. Scientific notation enables software engineers and data scientists to handle these vast datasets efficiently within programming languages and visualization tools. It helps ensure that extreme values are represented and read correctly, which makes overflow and magnitude errors easier to spot and supports data integrity. This computational fluency is key for extracting meaningful patterns from seemingly chaotic information streams, turning raw data into actionable intelligence.

Finally, as educational paradigms evolve to highlight quantitative literacy and critical thinking, scientific notation serves as a foundational pillar. It moves beyond rote memorization to encourage an intuitive grasp of scale and proportion, equipping learners with the mental tools to assess plausibility, estimate results, and understand the implications of exponential growth or decay in contexts ranging from population dynamics to viral spread. This cultivated sense of magnitude is essential for navigating an information-saturated world where numerical claims abound.

Conclusion: In essence, scientific notation transcends its mathematical origins to become an indispensable cognitive and communicative framework. Its power lies in its elegant simplicity: compressing the vast spectrum of numerical values into a standardized, scalable language that bridges the gap between human intuition and the extremes of the measurable universe. From validating complex computations and enabling interdisciplinary collaboration to driving technological precision and fostering sound data science, it provides the clarity and rigor needed to transform abstract quantities into concrete understanding. As humanity continues to probe the frontiers of the infinitesimal and the cosmic, and as data complexity intensifies, mastery of scientific notation remains not just a technical skill, but a fundamental competency for clear, accurate, and insightful analysis in an increasingly quantified world. It is the silent translator that ensures our most ambitious measurements and discoveries remain comprehensible and actionable.