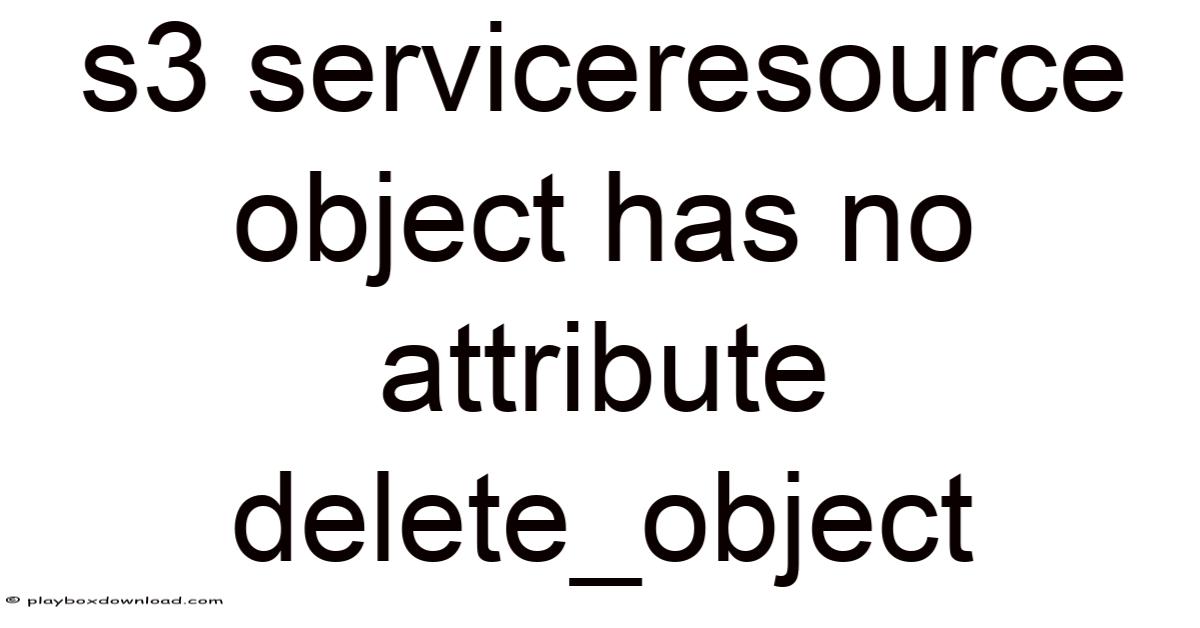

Understanding how the DeleteObject operation behaves is crucial for developers and data managers working with Amazon S3. When you encounter a message that the DeleteObject method is unavailable, it often signals a deeper issue in how the service is configured or used. In this article, we will explore the reasons behind this error, its impact on your operations, and how to work around it effectively.

The first thing to recognize is that DeleteObject is a standard Amazon S3 API operation: it is exposed as aws s3api delete-object in the AWS CLI and as delete_object in boto3, so objects can be deleted programmatically. It is not something borrowed from other services such as AWS Lambda or AWS Glue; code running in those services deletes objects through the same S3 API. A message saying the method is unavailable therefore does not mean S3 lacks the operation. It usually means the call was rejected or blocked by configuration, which can be frustrating for developers who expect to manage their data efficiently.

When DeleteObject appears to be unavailable, it is essential to understand the underlying reasons. One primary cause is that the S3 bucket may not be configured correctly. For instance, if the caller lacks the s3:DeleteObject permission (or s3:DeleteObjectVersion when deleting a specific version), or if the bucket policy denies the action, the service will block deletion attempts. This is a common scenario for new users or those who have recently updated their bucket settings.
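To make the permission requirement concrete, here is a minimal sketch that builds an IAM policy document granting delete access on one bucket. The function name, the Sid, and the bucket name are hypothetical; only the action names and the policy structure come from IAM itself.

```python
import json


def build_delete_policy(bucket_name, include_versions=False):
    """Return an IAM policy document allowing object deletes in one bucket.

    s3:DeleteObjectVersion is only needed when callers delete specific
    versions in a versioned bucket.
    """
    actions = ["s3:DeleteObject"]
    if include_versions:
        actions.append("s3:DeleteObjectVersion")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowObjectDeletes",  # hypothetical statement ID
                "Effect": "Allow",
                "Action": actions,
                # Object-level actions apply to keys, hence the trailing /*
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


if __name__ == "__main__":
    # "my-bucket" is a placeholder bucket name.
    print(json.dumps(build_delete_policy("my-bucket", include_versions=True), indent=2))
```

An explicit Deny elsewhere (for example in the bucket policy) still overrides an Allow like this one, so a policy of this shape is necessary but not always sufficient.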

Another factor to consider is the version of the AWS SDK you are using. Different SDKs name and expose operations differently, and they may have varying levels of support for certain features. If you are using an outdated version, you might encounter limitations that prevent you from deleting objects as expected, so make sure you are on the latest SDK version to avoid compatibility issues. Updating not only improves functionality but also brings security fixes that protect your data.
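Whatever SDK is in use, it helps to see what the call looks like and how a failure surfaces. The sketch below assumes a boto3-style S3 client passed in by the caller; try_delete is a hypothetical helper, and it catches a generic exception only so the example runs without boto3 installed (with boto3 you would catch botocore.exceptions.ClientError).

```python
def try_delete(client, bucket, key, version_id=None):
    """Call DeleteObject and return (succeeded, detail).

    On success, detail is the response dict. On failure, detail is the
    service error code (e.g. "AccessDenied"), or "Unknown" if the
    exception carries no boto3-style response.
    """
    kwargs = {"Bucket": bucket, "Key": key}
    if version_id is not None:
        # Targets one specific version instead of adding a delete marker.
        kwargs["VersionId"] = version_id
    try:
        response = client.delete_object(**kwargs)
    except Exception as exc:  # with boto3: botocore.exceptions.ClientError
        error = getattr(exc, "response", {}).get("Error", {})
        return False, error.get("Code", "Unknown")
    return True, response


# Usage with a real client (requires boto3 and credentials):
#   import boto3
#   ok, detail = try_delete(boto3.client("s3"), "my-bucket", "path/to/file.txt")
```

Returning the error code rather than raising makes it easy to tell a permissions problem apart from other failures before deciding what to fix.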

The apparent absence of DeleteObject can also confuse developers. Many rely on it to manage storage efficiently, and when deletes fail, workflows are disrupted. This is especially true in environments with strict data retention policies, where blocked deletions are often intentional. Understanding the implications is vital for keeping operations running smoothly.

In addition to the technical issues, do not overlook the effect on data management; it carries more weight than people think. When you cannot delete objects, it raises questions about data lifecycle management. You may need to implement alternative strategies, such as S3 Lifecycle rules that transition objects to cheaper storage classes or expire them automatically. This approach can help optimize your storage costs while ensuring that your data remains accessible when needed.

To address the situation effectively, developers should take a proactive approach. First, verify the configuration of your S3 bucket. Ensure that the correct permissions are set and that the access policies align with your requirements. This step is crucial in preventing future issues with both failed and unauthorized deletions.

Next, consider the other deletion methods available in the AWS ecosystem. For example, you can use the DeleteObjects operation to remove up to 1,000 keys in a single request, the DeleteBucket operation to remove an entire bucket once it has been emptied, or AWS CLI commands such as aws s3 rm to manage your storage. These alternatives can provide the flexibility you need while adhering to best practices.

When DeleteObject appears to be unavailable, it is also wise to stay informed about updates from AWS. The company frequently enhances its services, and new features may change how deletions behave. By keeping an eye on these updates, you can adapt your approach and ensure that your projects remain aligned with the latest capabilities.

In conclusion, a DeleteObject call that fails or appears unavailable in Amazon S3 is a significant challenge that requires careful consideration. By understanding the reasons behind it, implementing alternative strategies, and staying updated with AWS developments, you can effectively manage your data and maintain operational efficiency. Remember, every challenge presents an opportunity to learn and improve your approach to cloud storage management.

This article aims to provide you with a comprehensive overview of the situation, equipping you with the knowledge needed to navigate the constraints. Whether you are a developer, a data manager, or simply a curious learner, understanding these nuances will enhance your ability to work with Amazon S3 effectively. With the right strategies in place, you can overcome these obstacles and continue your journey in mastering cloud technologies.

Leveraging Versioning as a Safety Net

If your bucket already has versioning enabled, a blocked permanent delete becomes less of a roadblock. Calling DeleteObject without a version ID does not erase anything permanently: S3 adds a delete marker as the latest version. This marker tells S3 to treat the object as deleted for read operations while preserving every prior version in the storage layer. Supplying a version ID, as in the command below, instead permanently removes that specific version.

    aws s3api delete-object \
      --bucket my-bucket \
      --key path/to/file.txt \
      --version-id <version-id>

By retaining older versions, you gain an audit trail and an easy rollback path, which is valuable for compliance regimes such as GDPR or HIPAA. If you later decide the object truly belongs in the trash, you can purge the unwanted versions in bulk using the DeleteObjects API with a list of version IDs (up to 1,000 entries per request). This two‑step approach mirrors the classic “soft‑delete → hard‑delete” pattern common in relational databases.
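The hard-delete step can be sketched as follows, assuming a boto3-style S3 client supplied by the caller. purge_versions and chunked are hypothetical helper names; the 1,000-entry cap per DeleteObjects request is the service limit.

```python
def chunked(items, size):
    """Yield consecutive slices of `items`, each holding at most `size` entries."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def purge_versions(client, bucket, versions, batch_size=1000):
    """Permanently delete (key, version_id) pairs via DeleteObjects.

    Returns the per-object errors reported by S3 across all batches.
    """
    if not 1 <= batch_size <= 1000:
        raise ValueError("DeleteObjects accepts between 1 and 1000 entries per request")
    errors = []
    for batch in chunked(list(versions), batch_size):
        response = client.delete_objects(
            Bucket=bucket,
            Delete={
                "Objects": [{"Key": key, "VersionId": vid} for key, vid in batch],
                "Quiet": True,  # only failures are echoed back in the response
            },
        )
        errors.extend(response.get("Errors", []))
    return errors


# Usage with a real client (requires boto3 and credentials):
#   import boto3
#   failed = purge_versions(boto3.client("s3"), "my-bucket", [("path/to/file.txt", "some-version-id")])
```

Collecting the Errors lists matters because DeleteObjects can succeed as a request while still rejecting individual entries, for example versions protected by Object Lock.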

Automating Lifecycle Rules for Versioned Buckets

When versioning is active, lifecycle policies become even more powerful. You can define separate actions for:

| Object State | Lifecycle Action | Purpose |
|---|---|---|
| Current version | Transition to INTELLIGENT_TIERING after 30 days | Reduces cost for data with changing or unpredictable access patterns |
| Non‑current version | Transition to GLACIER after 90 days | Archives older copies cheaply |
| Expired delete marker | Remove once no non‑current versions remain | Prevents orphaned markers from accumulating |

These rules are expressed in JSON and attached to the bucket via the put-bucket-lifecycle-configuration command or through the console. Because the lifecycle engine runs asynchronously, you don’t need to manually invoke delete operations; S3 will take care of the heavy lifting once the conditions are met.
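As an illustration of what such a configuration might look like, the sketch below builds the rule set as a Python dictionary in the shape boto3's put_bucket_lifecycle_configuration expects. The function name and rule ID are hypothetical, and delete-marker cleanup is expressed here with the ExpiredObjectDeleteMarker flag rather than a day count, which is an assumption about how the rule would be mapped.

```python
import json


def build_lifecycle_configuration(prefix=""):
    """Return a lifecycle configuration for a versioned bucket.

    An empty prefix applies the rule to every object in the bucket.
    """
    rule = {
        "ID": "versioned-bucket-housekeeping",  # hypothetical rule name
        "Status": "Enabled",
        "Filter": {"Prefix": prefix},
        # Current versions: move to Intelligent-Tiering 30 days after creation.
        "Transitions": [{"Days": 30, "StorageClass": "INTELLIGENT_TIERING"}],
        # Non-current versions: archive 90 days after they are superseded.
        "NoncurrentVersionTransitions": [
            {"NoncurrentDays": 90, "StorageClass": "GLACIER"}
        ],
        # Remove delete markers that no longer have any versions behind them.
        "Expiration": {"ExpiredObjectDeleteMarker": True},
    }
    return {"Rules": [rule]}


if __name__ == "__main__":
    print(json.dumps(build_lifecycle_configuration(), indent=2))

# Applying it with a real client (requires boto3 and credentials):
#   import boto3
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="my-bucket",
#       LifecycleConfiguration=build_lifecycle_configuration(),
#   )
```

Putting a new lifecycle configuration replaces the bucket's existing one, so any rules already in place need to be included in the same document.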

Using Object Lock for Immutable Records

In some regulated environments, you might actually want objects to be undeletable. Amazon S3 Object Lock provides WORM (Write‑Once‑Read‑Many) protection, ensuring that once an object version is written, it cannot be overwritten or deleted for a defined retention period. Object Lock works only on versioned buckets, and together the two give you a robust immutable record, ideal for financial statements, medical records, or legal evidence.

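If a delete is rejected because of Object Lock, the correct response is not to fight the restriction but to respect the compliance intent. Note that the lock protects specific object versions: a DeleteObject call without a version ID still succeeds by adding a delete marker, while deleting the protected version itself is denied. Instead of forcing the deletion, focus on: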

- Planning retention windows at the time of ingestion.

- Creating new objects for corrected data rather than attempting to modify the locked one.

- Archiving the locked objects to cheaper tiers (e.g., Glacier Deep Archive) once the retention expires.
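Before attempting a version-specific delete, it can help to check whether Object Lock would reject it. The sketch below inspects a dictionary shaped like a boto3 head_object response; version_delete_blocked is a hypothetical helper, and the check deliberately ignores the governance-mode bypass permission.

```python
from datetime import datetime, timezone


def version_delete_blocked(head, now=None):
    """Return a reason string if Object Lock protects this version, else None.

    `head` is a dict shaped like a boto3 head_object response.
    """
    now = now or datetime.now(timezone.utc)
    # A legal hold blocks deletion regardless of any retention date.
    if head.get("ObjectLockLegalHoldStatus") == "ON":
        return "legal hold is active"
    retain_until = head.get("ObjectLockRetainUntilDate")
    if retain_until is not None and retain_until > now:
        mode = head.get("ObjectLockMode", "UNKNOWN")
        return f"{mode} retention until {retain_until.isoformat()}"
    return None


# Usage with a real client (requires boto3 and credentials):
#   import boto3
#   head = boto3.client("s3").head_object(
#       Bucket="my-bucket", Key="path/to/file.txt", VersionId="some-version-id")
#   reason = version_delete_blocked(head)
```

A None result only means Object Lock is not the obstacle; IAM and bucket policies can still deny the request.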

Monitoring and Auditing Deletion Attempts

Even when you cannot delete an object, it’s critical to know who tried and why. AWS CloudTrail can record S3 API calls, including failed DeleteObject attempts, but object-level operations are data events, which are not logged by default and must be enabled on the trail. By routing these logs to Amazon CloudWatch Logs or a SIEM solution, you can:

- Trigger alerts when unauthorized users attempt deletions.

- Correlate deletion attempts with IAM policy changes.

- Generate compliance reports that demonstrate due diligence.

A simple CloudWatch metric filter might look like:

    {
      "filterPattern": "{ $.eventName = DeleteObject && $.errorCode = AccessDenied }",
      "metricName": "UnauthorizedDeleteAttempts",
      "metricNamespace": "S3Security"
    }

Setting an alarm on UnauthorizedDeleteAttempts ensures that security teams are notified promptly, allowing rapid remediation.
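To show how a filter like this might be registered through the SDK, here is a sketch that builds the keyword arguments for the CloudWatch Logs put_metric_filter call in boto3. The function name and the log group name are hypothetical, and the pattern uses the parenthesized, quoted form of the same condition.

```python
def metric_filter_request(log_group_name):
    """Build keyword arguments for logs.put_metric_filter.

    Each matching CloudTrail event increments the metric by 1.
    """
    return {
        "logGroupName": log_group_name,
        "filterName": "UnauthorizedDeleteAttempts",
        "filterPattern": (
            '{ ($.eventName = "DeleteObject") && ($.errorCode = "AccessDenied") }'
        ),
        "metricTransformations": [
            {
                "metricName": "UnauthorizedDeleteAttempts",
                "metricNamespace": "S3Security",
                "metricValue": "1",  # the API expects this value as a string
            }
        ],
    }


# Usage with a real client (requires boto3 and credentials);
# "cloudtrail-logs" is a placeholder log group name:
#   import boto3
#   boto3.client("logs").put_metric_filter(**metric_filter_request("cloudtrail-logs"))
```

The filter only sees events that reach the log group, so the trail must deliver S3 data events to CloudWatch Logs for the metric to move.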

Alternative Storage Solutions for “Delete‑Heavy” Workloads

If your application’s core workflow involves frequent creation and disposal of temporary files (e.g., video transcoding, batch analytics), S3 might not be the most cost‑effective choice. The table below compares a few options and how each handles deletion:

| Service | Ideal Use‑Case | Deletion Model |
|---|---|---|
| Amazon EFS | Shared file system for EC2 instances | POSIX delete semantics, instant removal |
| Amazon FSx for Lustre | High‑throughput compute workloads | Native file delete |
| Amazon S3 Object Lambda | On‑the‑fly data transformation | Still subject to S3 delete rules |
| Amazon S3 Batch Operations | Bulk operations across millions of objects | Asynchronous; no built‑in delete operation, so bulk deletes run through an invoked Lambda function or lifecycle expiration and still respect bucket policies |

By routing transient data to a service that supports immediate deletions, you can keep your S3 bucket reserved for long‑term, immutable assets, thereby simplifying lifecycle management and reducing unexpected cost spikes.

Summarizing the Playbook

| Step | Action | Tool |
|---|---|---|
| 1 | Audit bucket permissions & IAM policies | AWS IAM, S3 console |
| 2 | Enable versioning (if not already) | aws s3api put-bucket-versioning |
| 3 | Define lifecycle rules for current & non‑current versions | JSON lifecycle config |
| 4 | Implement Object Lock where immutability is required | aws s3api put-object-lock-configuration |
| 5 | Set up CloudTrail & CloudWatch alarms for delete attempts | CloudTrail, CloudWatch Logs |
| 6 | Evaluate alternative storage for delete‑intensive data | EFS, FSx, etc. |

Final Thoughts

A DeleteObject call that fails or appears unavailable in Amazon S3 is not a dead end; the safeguards that block it, such as permissions, versioning, and Object Lock, nudge architects toward more deliberate data governance. By embracing versioning, lifecycle policies, and immutable storage options, you transform a perceived limitation into a strategic advantage, enhancing compliance, auditability, and cost efficiency simultaneously.

Remember that every AWS service evolves. Keeping an eye on the AWS What's New feed, subscribing to the S3 blog, and participating in the AWS re:Post community will help you learn early when new deletion capabilities or API enhancements arrive. With a proactive mindset and the toolbox outlined above, you can confidently work through the constraints, safeguard your data, and continue to extract maximum value from Amazon S3.