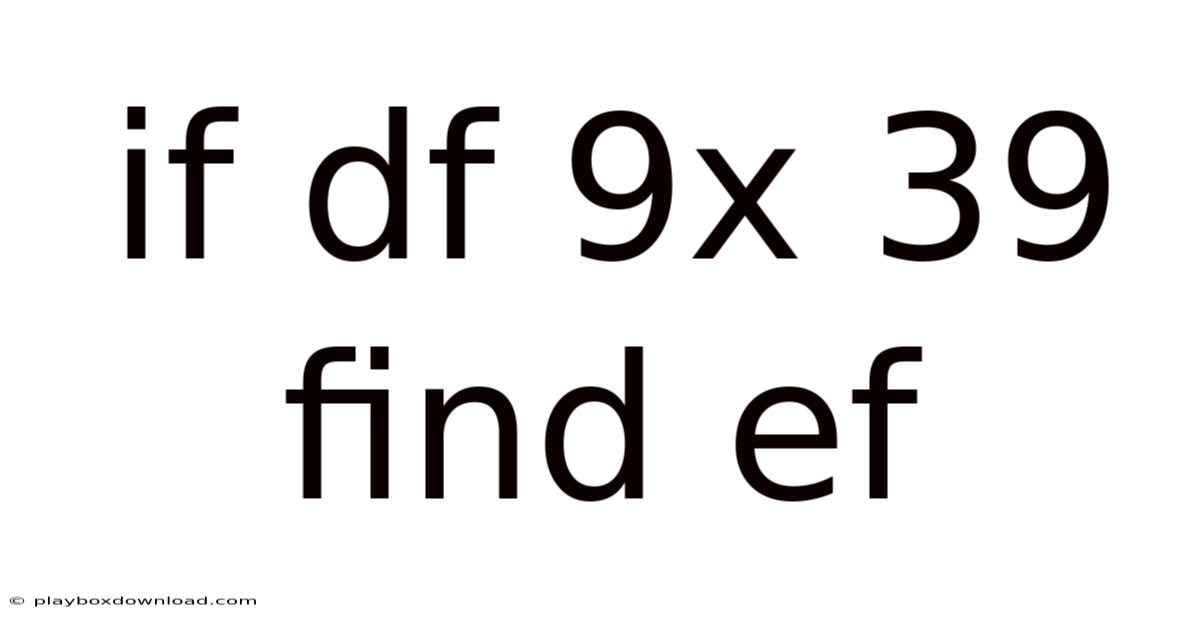

The interplay between data precision and analytical clarity often determines whether a data-driven project succeeds or fails. In data science and computational analysis, the ability to interpret results accurately is essential, and this is where verifying conditions becomes indispensable. Even the most sophisticated tools can falter if they are applied without the right understanding. Consider a dataset, meticulously curated and organized, that holds the key to insights that could change industry practice or solve complex problems. When that dataset is structured as a DataFrame, particularly one named df 9x 39 find ef, ensuring consistency and correctness becomes a critical process, and the question of how to confirm whether df 9x 39 find ef adheres to expected criteria takes center stage. The task demands careful attention, because even minor deviations can lead to misleading conclusions or wasted resources. The challenge lies not merely in identifying discrepancies but in addressing them effectively to preserve the integrity of the analysis. This article looks at the nuances of data validation and offers a roadmap for handling such challenges with confidence. It begins with the fundamental purpose of validation and then moves to a structured approach that balances technical rigor with practical applicability, covering both the technical details and the practices that keep data handling reliable and robust.

Subheadings structure the article into digestible segments that keep the focus narrow while still allowing comprehensive coverage. Each section builds on the previous one, so the whole forms a cohesive framework for understanding and applying the material. The opening sections establish why data accuracy matters and why such verifications are essential. Later sections cover methods for carrying out validation, common pitfalls to avoid, and strategies for maintaining consistency across diverse datasets. Practical examples connect the concepts to real-world use, and tables and lists present steps and processes in an organized way. These structural choices are meant to keep the material accessible, so that readers less familiar with the technical terminology can still follow it.

The process of validating df 9x 39 find ef involves several stages, each of which requires close attention. The first is understanding the structure of the DataFrame. Each column represents a specific attribute or metric, and the relationships between columns must be clear before inconsistencies can be assessed. For instance, if 9x refers to a numerical value, 39 might denote a categorical label or a specific range, while ef could represent a derived metric or a target variable. Recognizing these relationships allows for targeted validation checks. Tools such as pandas in Python offer functions for this purpose, making it straightforward to run comparisons or statistical tests. Manual verification remains essential, however, particularly for nuanced data types or edge cases that automated tools might overlook. In such scenarios, the practitioner should combine automated scripts with manual review. This dual approach balances efficiency with thoroughness and prevents oversights that could compromise the outcome. The context in which the data was collected also matters. Whether df 9x 39 find ef was derived from a survey, financial records, or experimental results, understanding the source adds meaning to each field. That awareness keeps the validations both technically sound and contextually relevant, in line with the broader objectives of the analysis.
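As a minimal sketch of that first structural step, the snippet below summarizes each column's dtype, missing-value count, and number of distinct values using pandas. The small DataFrame, its values, and the helper name `summarize_structure` are assumptions made for illustration; only the column labels 9x, 39, and ef come from the discussion above.

```python
import pandas as pd

# Hypothetical stand-in for the DataFrame discussed above; the values are
# illustrative only and do not come from any real dataset.
df = pd.DataFrame({
    "9x": [1.5, 2.0, None, 4.25],
    "39": ["a", "b", "b", "c"],
    "ef": [10, 20, 30, 40],
})

def summarize_structure(frame: pd.DataFrame) -> pd.DataFrame:
    """Return one row per column with its dtype, null count, and distinct count."""
    return pd.DataFrame({
        "dtype": frame.dtypes.astype(str),
        "n_missing": frame.isna().sum(),
        "n_unique": frame.nunique(dropna=True),
    })

summary = summarize_structure(df)
print(summary)
```

A summary like this is only a starting point: it shows where types or gaps look suspicious, but it cannot say what a column is supposed to mean.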

One of the primary challenges in this process is the variability inherent in data sources. Different datasets may follow different standards, which makes it difficult to establish universal validation criteria. For example, a column labeled 9x might contain both numerical and textual entries, so checks must distinguish between the two. Similarly, 39 could represent a binary value, a percentage, or an ordinal rank, each of which calls for a different validation technique. This variability is why the approach has to be flexible, letting practitioners adapt their methods without compromising the integrity of the process. The scale of the data, whether it spans thousands of rows or only a handful, also influences the scope of the validation effort.
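One possible way to separate numerical from textual entries in a mixed column is to attempt a numeric conversion and inspect what fails. The sketch below uses `pd.to_numeric` with `errors="coerce"`; the sample values and the helper name `split_numeric_and_text` are hypothetical.

```python
import pandas as pd

def split_numeric_and_text(series: pd.Series):
    """Separate entries that parse as numbers from those that do not."""
    parsed = pd.to_numeric(series, errors="coerce")
    # Entries that were present but failed to parse are treated as text.
    is_text = parsed.isna() & series.notna()
    return parsed[~is_text], series[is_text]

# Hypothetical mixed-type column; the values are illustrative only.
col_9x = pd.Series([12, "7.5", "n/a", None, "unit-3"], name="9x")
numeric_part, text_part = split_numeric_and_text(col_9x)
print(text_part.tolist())
```

Genuinely missing entries stay in the numeric part as NaN, so they can be counted separately from entries that are present but not numeric.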

Building on these points, clarity becomes a cornerstone of effective communication. With straightforward explanations and visual aids, even readers new to data analysis can engage meaningfully with the process. This improves understanding and makes collaboration easier, so that the collective effort produces dependable outcomes. The goal remains the same throughout: results that are both technically sound and accessible. Keeping to that goal makes the work usable by people with different backgrounds and builds trust in its conclusions.

Scaling Validation Strategies for Larger Datasets

When the data set expands beyond a few hundred rows, the manual‑review component quickly becomes untenable. In these situations, a tiered validation pipeline can keep the workload manageable while preserving rigor:

| Stage | Goal | Typical Tools/Techniques |
|---|---|---|
| 1. Schema Enforcement | Confirm that every column adheres to an expected type and naming convention. | pandas dtype checks (df.dtypes), pandera, SQL CHECK constraints. |
| 2. Statistical Sanity Checks | Detect outliers, unexpected distributions, or drift relative to a baseline. | Z‑score filtering, IQR fences, scipy.stats, statsmodels. |
| 3. Referential Integrity Audits | Ensure that foreign‑key relationships hold across tables or files. | Merge‑based validation, dplyr::anti_join, custom hash maps. |
| 4. Spot‑Check Sampling | Randomly select rows for human inspection to catch edge‑case failures. | df.sample(frac=0.01), stratified sampling based on key variables. |
| 5. Automated Alerting | Flag any rows that violate pre‑defined thresholds for immediate review. | Logging frameworks, email or Slack notifications via Airflow or Prefect. |

By automating the first three stages, the analyst can focus human effort on the final two, where nuance matters most. For example, a row that fails a simple range check may be an entry error, but a row that passes all numeric checks yet contains a contradictory textual note will only be caught during spot‑checking.
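The first four stages can be sketched with plain pandas, as below. This is an illustration under stated assumptions rather than a prescribed implementation: the `orders` and `customers` tables, the `EXPECTED_KINDS` mapping, and all function names are hypothetical, and a dedicated library such as pandera would normally replace the hand-written schema check.

```python
import pandas as pd

# Hypothetical expected schema: column name -> NumPy dtype kind
# ("i" integer, "f" float). Names are illustrative only.
EXPECTED_KINDS = {"order_id": "i", "customer_id": "i", "amount": "f"}

def check_schema(df, expected_kinds):
    """Stage 1: list columns that are missing or have an unexpected dtype kind."""
    problems = []
    for col, kind in expected_kinds.items():
        if col not in df.columns:
            problems.append(f"missing column: {col}")
        elif df[col].dtype.kind != kind:
            problems.append(f"{col}: expected kind {kind!r}, got {df[col].dtype.kind!r}")
    return problems

def iqr_outliers(series, k=1.5):
    """Stage 2: boolean mask of values outside the IQR fences."""
    q1, q3 = series.quantile(0.25), series.quantile(0.75)
    iqr = q3 - q1
    return (series < q1 - k * iqr) | (series > q3 + k * iqr)

def orphan_rows(child, parent, key):
    """Stage 3: rows in `child` whose key has no match in `parent` (an anti-join)."""
    lookup = parent[[key]].drop_duplicates()
    merged = child.merge(lookup, on=key, how="left", indicator=True)
    return merged[merged["_merge"] == "left_only"].drop(columns="_merge")

# Hypothetical sample data.
orders = pd.DataFrame({
    "order_id": [1, 2, 3, 4, 5, 6],
    "customer_id": [10, 10, 11, 12, 11, 99],
    "amount": [20.0, 22.5, 19.0, 21.0, 20.5, 500.0],
})
customers = pd.DataFrame({"customer_id": [10, 11, 12]})

schema_problems = check_schema(orders, EXPECTED_KINDS)
outlier_mask = iqr_outliers(orders["amount"])
orphans = orphan_rows(orders, customers, "customer_id")
# Stage 4: a reproducible random sample for human review.
spot_check = orders.sample(frac=0.5, random_state=0)
```

In this sample, the order with amount 500.0 is flagged by the IQR fences and the order referencing customer 99 is returned as an orphan; a fixed `random_state` keeps the spot-check sample reproducible between runs.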

Leveraging Domain Knowledge

No amount of code can substitute for the insight that comes from understanding the phenomenon behind the numbers. Consider the ambiguous column 9x. In a manufacturing context, 9x might denote a machine identifier; in a clinical trial, it could be a dosage level. A validation rule for the column therefore has to depend on context:

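```python
# Hypothetical allow-list; replace with the real machine identifiers.
VALID_MACHINE_IDS = {"M-001", "M-002"}

def validate_9x(value, context):
    """Return True if `value` is valid for column 9x in the given context."""
    if context == "manufacturing":
        return value in VALID_MACHINE_IDS
    elif context == "clinical":
        return 0 < value <= 500  # mg dosage range
    else:
        raise ValueError(f"Unknown context: {context!r}")
```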

Embedding such conditional logic keeps the validation framework extensible and avoids the “one‑size‑fits‑all” pitfall. Documenting the rationale for each rule, ideally alongside the code in docstrings or in a separate data dictionary, also creates a living reference that both current and future team members can consult.

Visualizing Validation Results

Numbers tell a story, but visual cues accelerate comprehension. After running the validation pipeline, summarizing the findings in a dashboard can surface patterns that raw logs obscure. Common visualizations include:

- Heatmaps of missing‑value frequencies across columns, highlighting systematic gaps.

- Bar charts of rule‑failure counts, ordered to reveal the most problematic fields.

- Time‑series plots of key metrics before and after cleaning, illustrating the impact of the validation steps.

Tools such as Plotly, Altair, or Power BI integrate smoothly with pandas data frames, allowing the analyst to generate interactive reports that stakeholders can explore without needing to read code.
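Before any chart is drawn, the validation results have to be aggregated into the numbers the chart will show. The sketch below computes the inputs for two of the visualizations listed above, a missing-value summary and ordered rule-failure counts, using only pandas. The rule names, the `failures` layout (one boolean column per rule, True meaning failure), and the sample data are assumptions for illustration.

```python
import pandas as pd

# Hypothetical validation output: one row per record, one boolean column per
# rule, True meaning the rule failed. Rule names are illustrative only.
failures = pd.DataFrame({
    "range_check": [False, True, False, True],
    "type_check": [False, False, False, True],
    "fk_check": [True, True, True, False],
})
# Hypothetical raw data with gaps.
data = pd.DataFrame({"9x": [1.0, None, 3.0, None], "ef": [5, 6, 7, 8]})

# Share of missing values per column: the input for a missing-value heatmap.
missing_share = data.isna().mean()

# Failure count per rule, most problematic first: the input for a bar chart.
failure_counts = failures.sum().sort_values(ascending=False)

print(missing_share)
print(failure_counts)
```

Either Series can then be handed to a plotting library; for instance, with matplotlib installed, `failure_counts.plot.bar()` would draw the bar chart directly from pandas.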

Maintaining a Feedback Loop

Validation is not a one‑off event; it is part of an iterative data lifecycle. After each validation run, the results should feed back into upstream processes:

- Data Ingestion – Adjust ETL scripts to catch recurring errors at source.

- Data Governance – Update policies and data‑quality KPIs based on observed failure rates.

- Model Development – Re‑train predictive models only after confirming that the training set meets the established quality thresholds.

By closing this loop, organizations transform validation from a defensive checkpoint into a proactive quality‑enhancement mechanism.
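One simple way to wire validation results into model development is a quality gate that blocks retraining when any rule's failure rate exceeds an agreed threshold. The function name `ready_for_training`, the 1% default threshold, and the sample rates below are all hypothetical; real thresholds would come from the data-quality KPIs mentioned above.

```python
def ready_for_training(failure_rates, max_rate=0.01):
    """Return (ok, offenders); ok is True only if every rate is within max_rate."""
    offenders = {rule: rate for rule, rate in failure_rates.items() if rate > max_rate}
    return (not offenders), offenders

# Hypothetical failure rates from the latest validation run.
rates = {"range_check": 0.002, "fk_check": 0.04}
ok, offenders = ready_for_training(rates)
print(ok, offenders)
```

Returning the offending rules alongside the verdict gives the ingestion and governance steps something concrete to act on, not just a pass or fail.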

A Pragmatic Checklist for Practitioners

| ✅ | Action |
|---|---|
| 1 | Define a clear data dictionary that captures the semantics of 9x, 39, find, ef, and any other ambiguous fields. |
| 2 | Implement schema checks using a library that can be version‑controlled (e.g., pandera). |
| 3 | Run statistical sanity tests to flag outliers and distributional shifts. |
| 4 | Verify referential integrity across related tables or files. |
| 5 | Conduct stratified spot‑checks, especially on rows that trigger multiple rule violations. |
| 6 | Produce a concise visualization report and circulate it to both technical and non‑technical stakeholders. |
| 7 | Document any rule modifications and the reasoning behind them for future audits. |
| 8 | Feed validation outcomes back into data collection protocols to reduce recurrence. |

Concluding Thoughts

Effective data validation is a balancing act between automation and human insight, and between rigid rule‑sets and the flexibility that diverse data sources require. By structuring the process into scalable stages, anchoring each rule in domain knowledge, and visualizing outcomes for transparent communication, analysts can safeguard the integrity of even the most heterogeneous data sets, whether they contain cryptic columns like 9x and 39 or more straightforward numeric fields. Ultimately, the rigor applied today not only prevents errors in the immediate analysis but also builds a culture of quality that carries through every downstream decision. In a world where data drives strategy, that culture is the most valuable asset of all.